Hey, I'm back.

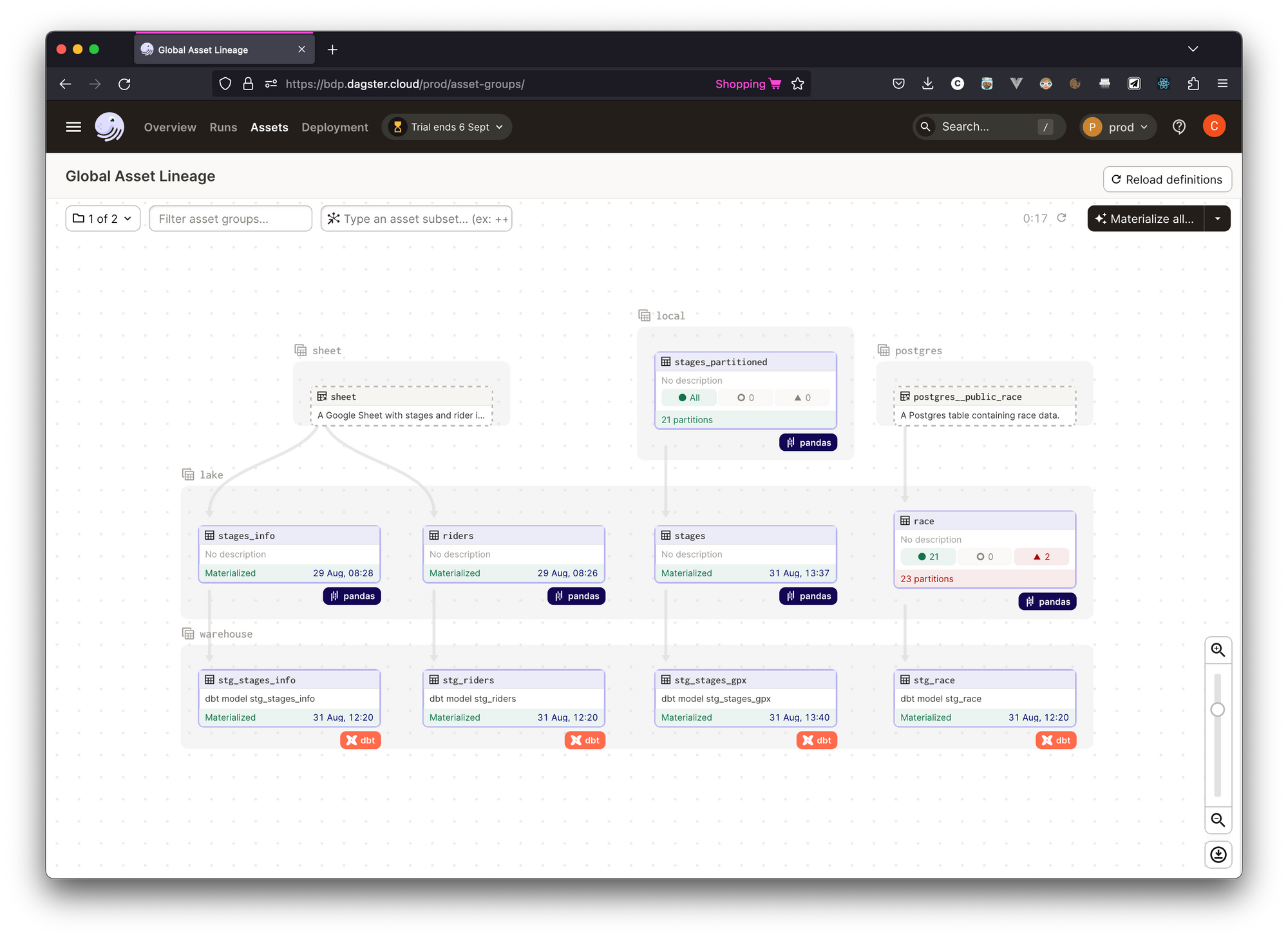

I've taken an unplanned 3-week break since the last Data News and, let's be honest, it was necessary! I spent a few hours working on the fancy data stack project and articles are in the works, but it was idealistic to think I could produce quality code and content while enjoying the summer. Like wine, it takes time to get it right. If you want a first glimpse of the Dagster code, you can look at it on GitHub. It's not yet documented, but the commit messages are clean.

On September 1, I'm still getting used to the school rhythm. A new year starts in September: new friends, new classes and new things. Even if, as an adult, things are different now. Data News is back, but with the same recipe: a weekly newsletter to let you catch up on the previous week's articles. I make the selection myself; I choose things I like while being under others' influence. But I'm not an influencer. I just create content.

This week features what happened in August. Even though it was the summer holidays, news, features and drama hit the data world. Enjoy the news recap.

dbt tests 🧪

dbt Core's proposition has been to bring software engineering practices to SQL development. Obviously testing is invited to the party, but tests are hard and everyone does and understands tests differently. There are unit, integration, functional and end-to-end tests.

This summer a lot of people wrote about testing with dbt.

- dbt tests: How to write fewer and better data tests? — Ari catalogs the kinds of tests you can write with dbt. Do you want to test data or code changes? (This is the more important question tbh.) Do you want to test schema changes, missing data, volume or value anomalies? He covers everything.

- An overview of testing options for dbt — Another exhaustive and less opinionated list of the options out there to write tests on the data.

- A simple approach to implementing unit tests for dbt Models — Mahdi proposes a CTE nomenclature to create inputs and outputs in dbt models in order to unit test them.

- dbt Core unit tests are coming — A discussion on GitHub about unit tests and fixture definitions in YAML to test models. If implemented within dbt Core, it would be the most awesome feature, because hacking with seeds and custom macros looks nasty.
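
The general idea behind unit testing a SQL model (fixed input rows in, expected output rows out) can be sketched outside dbt with Python's built-in sqlite3 module. This is only an illustration of the principle, not dbt's API and not the nomenclature from the articles above; the `orders` table, its columns and the `run_model` helper are hypothetical.

```python
import sqlite3

# Hypothetical "model": revenue per customer, reading from an `orders` input.
MODEL_SQL = """
SELECT customer_id, SUM(amount) AS revenue
FROM orders
GROUP BY customer_id
ORDER BY customer_id
"""


def run_model(input_rows):
    """Load fixture rows into an in-memory database and run the model on them."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", input_rows)
    result = conn.execute(MODEL_SQL).fetchall()
    conn.close()
    return result


# Fixture (input) and expected output, both written by hand.
fixture = [(1, 10.0), (1, 5.0), (2, 7.5)]
expected = [(1, 15.0), (2, 7.5)]

assert run_model(fixture) == expected
```

The test never touches real data: it only checks that the SQL logic turns a known input into a known output, which is the part that seeds and custom macros currently emulate in dbt.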

Generative AI 🤖

I haven't really been keeping up with the news because it moves too fast, but here are a few things that have stood out:

- Meta releasing models faster than before — Expanding DINOv2, a computer vision model (on X), releasing SeamlessM4T, a multilingual multimodal translation model (on X), and releasing Code Llama, an LLM for coding.

- Snowflake fine-tuning Code Llama for SQL generation — With this fine-tuning it seems they are close to GPT-4 accuracy in text-to-SQL.

- Llama 2 is about as factually accurate as GPT-4 for summaries and is 30X cheaper.

- A French YouTuber launched on Twitch a 24/7 AI deep-faking French presidents (Macron, De Gaulle, Chirac) answering the Twitch chat's questions, but his channel got banned by a Twitch bot after AI-Macron said something illegal while answering a question about the worst French cities. AI fights: this is the future we want.

Fast News ⚡️

- Python into Excel — Microsoft and Anaconda announced Python coming into Excel. I'm bittersweet about it: on one side I don't think Excel is a good platform for software development; on the other side, let's be honest and face the truth, Excel is the only data platform business users want. Still, the big winner here is Microsoft, because the Python code will run on Azure.

- After Excel, Notebooks get a second youth — Meta explained how they schedule Jupyter Notebooks in production, Google announced BigQuery Studio with Notebooks embedded in the UI, and Jupyter released Jupyter AI (you call it with %ai) to bring Gen AI to the notebook.

- New features in Airflow — with 2.7 you get a Cluster Activity UI, and with the new airflowctl CLI you can spin up Airflow instances in a wink.

- Introducing the revamped dbt Semantic Layer — dbt Labs announced the Beta of the Semantic Layer, which will be a paid product in dbt Cloud. I've already written a lot about the semantic layer and more is to come. So let's see where it goes.

- Introducing SOL: Sequence Operations Language — A new language dedicated to sequence analysis, which can be useful when working with web traffic data.

- Answering "Why did the KPI change?" using decomposition — If you are an analyst who needs to explain every day why a metric increased or decreased, this article is for you. Max explores metrics decomposition for sum and ratio. This is brilliant.

- Apache Hudi: From Zero To One (1/10).
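
To give a feel for the KPI decomposition item above, here is a minimal sketch of one possible way to decompose a change for a sum metric and for a ratio metric. It is not necessarily the method used in Max's article; the function names and the sample numbers are hypothetical.

```python
def decompose_sum(before, after):
    """Per-segment contribution to the change of an additive metric.

    `before` and `after` map a segment name to the metric value per period.
    """
    segments = sorted(set(before) | set(after))
    return {s: after.get(s, 0.0) - before.get(s, 0.0) for s in segments}


def decompose_ratio(num0, den0, num1, den1):
    """Split the change of num/den into a numerator and a denominator effect.

    numerator effect:   change of the numerator, at the old denominator
    denominator effect: change of the denominator, at the new numerator
    The two effects add up exactly to num1/den1 - num0/den0.
    """
    numerator_effect = (num1 - num0) / den0
    denominator_effect = num1 * (1.0 / den1 - 1.0 / den0)
    return numerator_effect, denominator_effect


# Hypothetical revenue by country over two periods.
before = {"FR": 100.0, "DE": 80.0}
after = {"FR": 130.0, "DE": 70.0, "ES": 10.0}
contributions = decompose_sum(before, after)
total_change = sum(after.values()) - sum(before.values())
assert abs(sum(contributions.values()) - total_change) < 1e-9

# Hypothetical conversion rate: orders / visits.
num_eff, den_eff = decompose_ratio(50, 1000, 66, 1100)
assert abs((num_eff + den_eff) - (66 / 1100 - 50 / 1000)) < 1e-9
```

For a sum, each segment's delta is its contribution. For a ratio, the split tells you whether the metric moved because of the numerator (more orders) or the denominator (more visits).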

Drama

- Instacart's Snowflake bills — When public companies publish their results, the numbers get scrutinized. This time it was Instacart's bills: the company said it spent $13m, $28m and $51m on Snowflake in 2020, 2021 and 2022 respectively, and plans to spend $15m in 2023.

People supposed Instacart had found the magic solution to reduce costs; others said it had migrated to Databricks. But the main reason is prepaid credits. The Snowflake press team even wrote a post.

Still, you can watch the perfectly timed video about How Instacart Optimized Snowflake Costs by 50% or Snowflake optimisation at HelloFresh.

- Hashicorp changed Terraform license model — HashiCorp decided to move from the Mozilla Public License to the Business Source License (BSL). BSL is source-available and not really open-source. Following the announcement, OpenTF forked the repo.

Data platform stuff

4 articles that give food for thought about the future of the data field.

- The uses and abuses of cloud data warehouses — A streaming database saying to a batch database: "you're not suited for operational use-cases, only analytical". The batch database answers one day later.

- Level-up with a Medallion architecture — bronze, silver, gold are the structuring layers of the Medallion architecture. Matt explains it for you.

- The data contract pivot in data engineering — It's a fancy name, but it aims to solve upstream data problems with a technical + process solution.

- After the modern data stack: welcome back, data platforms.

Data Economy 💰

- Hugging Face raises $235m in Series D. You can see Hugging Face as the GitHub of machine learning models, but it's much more today: it's a global platform to distribute AI in every form possible. Obviously, with the new popularity of Generative AI models, HF is playing a key distribution role.

- Stemma has been acquired by Teradata. Stemma is a company founded by ex-Lyft employees who worked on the company's data catalog, Amundsen. Stemma is mainly built on top of Amundsen, with Enterprise features. Consolidation.

- Rockset raises $44m in Series B. Rockset is a real-time search (and analytics) database aiming to replace Elastic. Like Elastic but in the cloud.

- Ikigai Labs raises $25m in Series A. Ikigai provides a web platform to do data transformations in a visual way on top of tabular data. You can do entity resolution or forecasting for instance.

- Elementl becomes Dagster Labs, to make it clear. I'll soon be announcing blef Labs.

- The Information reported that OpenAI will pass $1b in annual revenue "over the next 12 months".

Feels good to be back, see you next week ❤️. I hope you enjoyed your summer.